VOD Infrastructure Evolution

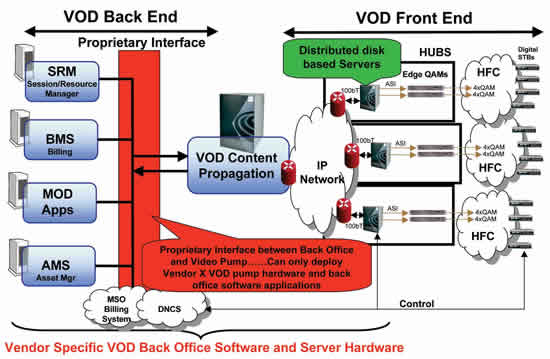

Video on demand (VOD) was introduced and embraced by the cable community in the late 1990s to differentiate its video product from that of the direct-to-home satellite community. Using the unique ability of the hybrid fiber/coax (HFC) infrastructure to target small groups of homes via narrowcasting and RF spectrum re-use, cable operators launched on-demand video services to offer a differentiated video service and, ultimately, to reduce subscriber churn and increase digital subscriber penetration.

First-gen

Early deployments of VOD service were primarily built as a nonlinear extension to the existing pay-per-view (PPV) model for movie content. As such, the PPV service model transitioned from a broadcast service to a unicast service, enabling per-subscriber interactivity with network-based video content. This early VOD model gave subscribers access to a small library of feature films and adult material, so the cable operator needed to support only a few hundred hours of movie titles with simple content rules and concurrent usage rates in the 1-3 percent range.

The infrastructure deployed to support these early deployments included proprietary back office software systems for asset management, resource management and billing, as well as proprietary interfaces to disk-based computer hardware systems used for content ingest, storage and streaming functions. These early VOD server deployments were primarily based on the DVB-ASI transport protocol for streaming functions, with a fixed and static topology between the disk-based VOD server and associated RF edge quadrature amplitude modulation (QAM) devices. The digital transport network, dominated in the late 1990s by synchronous optical network (SONET), asynchronous transfer mode (ATM) and proprietary video transport systems, was cost prohibitive and not well-suited to the transport of native DVB-ASI.
At the time, many cable operators enabled early VOD services by using a highly distributed architecture with VOD servers located at the majority of network hubs. An example of this architecture is displayed in Figure 1.

Over the last few years, cable operators have built upon their early on-demand success. In an effort to further differentiate their service offering and increase digital subscriber penetration, cable operators began augmenting the early VOD service, which was primarily used for standard definition (SD) movie content only. This involved the addition of high definition TV (HDTV) content, as well as subscription-based and free access to larger libraries of video assets including older movies, sitcom episodes and special interest content. The result was significantly larger libraries of content (1,500-3,000 hours) with more complex content rules, higher concurrent usage rates (8-10 percent), and greatly increased content turnover/ingest requirements. Ultimately, cable operators have enjoyed further business success, increased service differentiation, reduced churn, higher revenues and increased digital subscriber penetration.

To support the expanded VOD service offering, cable operators have endured expensive upgrades to their early infrastructures. Early technology solutions suffered from density and scalability limitations, extremely high power consumption, limited and troublesome content propagation and ingest performance, high costs associated with storage duplication at multiple locations, and the never-ending load balancing of service groups across point-to-point static DVB-ASI connections. These items, combined with the operational complexity of managing several copies of large content libraries across distributed server complexes, have resulted in a move to a more centralized VOD hardware infrastructure. This approach improves mean time to repair (MTTR), decreases operational cost and complexity, and also prepares cable operators for the next leap in on-demand viewing growth.

Centralized, GigE-based

Centralization of VOD server systems has largely been enabled by the emergence of low cost, Gigabit Ethernet (GigE)-based, wavelength division multiplexing (WDM) optical transport systems. These transport networks, when combined with wire speed layer 2/layer 3 Internet protocol (IP) switches and native IP/GigE VOD servers, allow for dynamic data capacity and throughput sharing across multiple server ports, blades and complexes. As a result, many operators have begun using user datagram protocol (UDP)/IP/GigE as the primary transport protocol for VOD.

Although 10GigE has also emerged, it has yet to be widely deployed as a VOD server streaming interface. In many deployments, 10GigE weakens the redundancy model of the centralized VOD infrastructure, which causes concerns for some operators. Over time, use of 10GigE for VOD streaming server interfaces can be expected to expand, increasing streaming densities and reducing end-to-end network costs. Figure 2 displays a dual-homed, centralized VOD server architecture with IP/GigE as the fundamental transport protocol for streaming functions. This type of architecture has gained momentum over the past year.
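
The rise in concurrent usage described above, from 1-3 percent to 8-10 percent, translates directly into streaming load on the servers and the GigE transport. The following is a minimal sketch of that arithmetic, not a sizing method from the article. The subscriber count, the per-stream bit rate and the usable GigE payload are all illustrative assumptions, and the function names are hypothetical.

```python
import math

# All three constants are illustrative assumptions, not figures from the article.
DIGITAL_SUBS = 100_000      # hypothetical digital subscriber count
SD_STREAM_MBPS = 3.75       # assumed constant bit rate of one SD stream
USABLE_GIGE_MBPS = 900.0    # assumed usable payload of one GigE port


def peak_streams(digital_subs: int, concurrency_pct: float) -> int:
    """Peak simultaneous streams at a given concurrent usage rate (in percent)."""
    return math.ceil(digital_subs * concurrency_pct / 100)


def gige_ports_needed(streams: int,
                      mbps_per_stream: float = SD_STREAM_MBPS,
                      usable_mbps_per_port: float = USABLE_GIGE_MBPS) -> int:
    """Whole GigE streaming ports required to carry the given stream count."""
    return math.ceil(streams * mbps_per_stream / usable_mbps_per_port)


# Compare the upper end of the early range with the upper end of the expanded range.
for label, pct in [("early VOD", 3), ("expanded VOD", 10)]:
    streams = peak_streams(DIGITAL_SUBS, pct)
    ports = gige_ports_needed(streams)
    print(f"{label}: {pct}% concurrency -> {streams} streams, {ports} GigE ports")
```

Under these assumptions, the stream count and port count both grow by roughly a factor of three between the two usage rates, which gives a sense of why shared, dynamically allocated GigE capacity is attractive compared with static point-to-point links.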

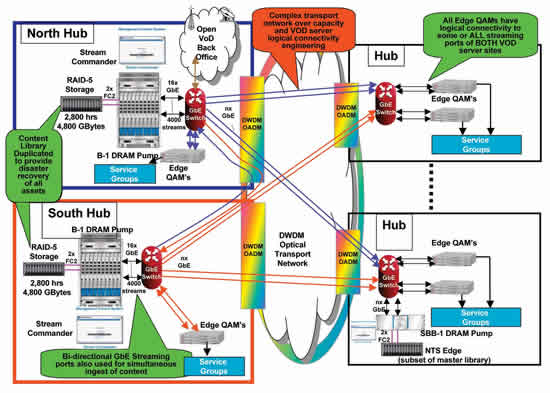

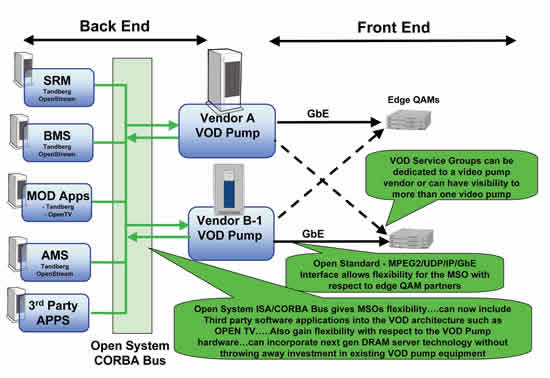

Video server equipment is located at two VOD headend sites. Each server “home” has access, via the IP/GigE/WDM transport infrastructure, to every remote hub. Content is propagated via the installed IP network to the two VOD servers. The content library is duplicated at the two geographically disparate server sites to provide full disaster recovery. It also affords server chassis redundancy because both VOD server chassis have visibility to all remote VOD hub sites. The operator can perform software upgrades on one server location at a time, thereby maintaining 100 percent service uptime during software maintenance windows. Each VOD server site is equipped to provide 50-100 percent of the total required system streaming capacity.
Hence, if a single server site goes down or loses connectivity to the network, the remaining site can accommodate 50-100 percent of the entire network’s peak streaming requirement. This number can be adjusted at each site, with corresponding differences in initial end-to-end network cost.

From an IP connectivity perspective, all attached edge QAM devices have logical connectivity to all streaming ports of both server sites via protected or unprotected WDM GigE connections to the VOD GigE switches (at each of the VOD server headends). The network is configured with “any edge QAM device to any server port” logical connectivity with dynamic sharing of total server streaming throughput and ports.

This architecture requires careful optical network traffic engineering because each dense WDM (DWDM) GigE path (from a single server site to each hub) may represent only 50 percent of that hub’s required capacity at peak streaming times. Transport network capacity engineering must be clearly understood because 100 percent of any hub’s on-demand traffic could attempt to use the available 50 percent of transport capacity (to a single server site) in the event of a path/network failure. To provide true 100 percent system streaming redundancy, the transport network would require 100 percent overcapacity, such that the data path to each hub (from a single server site) could carry 100 percent of the streaming capacity for that particular hub. To alleviate this problem, some cable operators remove the GigE switches at the remote hubs and configure the network such that each server site has logical connectivity to only half of any hub’s edge QAM devices, which avoids over-subscription of the transport network.

Open system architectures

There’s also demand for an open systems software approach to the back office.
The move to an open systems back office VOD software system began in 2001, when John Callahan of Time Warner Cable drove the development of the Interactive Services Architecture (ISA). ISA defines an open systems back office framework for interactive video deployments and was brought to reality by the N2 Broadband (now Tandberg) Open Stream family of products. This enabled TWC to assemble solutions where the video streaming server and back office software components were interchangeable and operated with an open set of software application programming interfaces (APIs). It also allowed TWC to deploy and integrate third-party interactive video services/applications. Comcast’s Next Generation On Demand (NGOD) initiative is similar to TWC’s ISA architecture. NGOD provides an open systems framework for multi-vendor interoperability within the end-to-end interactive, on demand video architecture. Charter Communications has also stated its intentions to move from proprietary infrastructures. Just as with high-speed data, proprietary systems were “a means to an end in the beginning”. The multi-vendor, open systems DOCSIS approach is increasingly prevalent in high-speed data, and the same appears to be happening in the VOD hardware and software segment. An example of an open systems VOD architecture is displayed in Figure 3.
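
The capacity trade-off in the dual-homed architecture discussed earlier (50-100 percent streaming capacity per server site, and transport paths that may carry only 50 percent of a hub’s peak) can be made concrete with a small calculation. The sketch below is illustrative only. The function names are hypothetical, and it assumes that demand is spread evenly across hubs and that each path carries half of a hub’s load in normal operation.

```python
def failover_coverage(site_share: float, path_share: float) -> float:
    """Fraction of a hub's peak demand still served after losing one server site.

    site_share: surviving site's streaming capacity as a fraction of total
                system peak demand (0.5 to 1.0 in the article).
    path_share: capacity of the surviving site's transport path to the hub,
                as a fraction of that hub's peak demand.
    Assumes demand is spread evenly across hubs (a simplification).
    """
    # Service is limited by whichever resource runs out first.
    return min(1.0, site_share, path_share)


def transport_overcapacity(path_share: float, normal_load_share: float = 0.5) -> float:
    """Extra path capacity relative to the load the path carries in normal operation."""
    return path_share / normal_load_share - 1.0


for site_share, path_share in [(0.5, 0.5), (1.0, 0.5), (1.0, 1.0)]:
    coverage = failover_coverage(site_share, path_share)
    extra = transport_overcapacity(path_share)
    print(f"site={site_share:.0%} path={path_share:.0%} -> "
          f"coverage={coverage:.0%}, transport overcapacity={extra:.0%}")
```

The middle case shows the point made in the text: a server site sized for 100 percent of peak demand still delivers only half of a hub’s peak after a failure if the transport path to that hub is sized for 50 percent.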

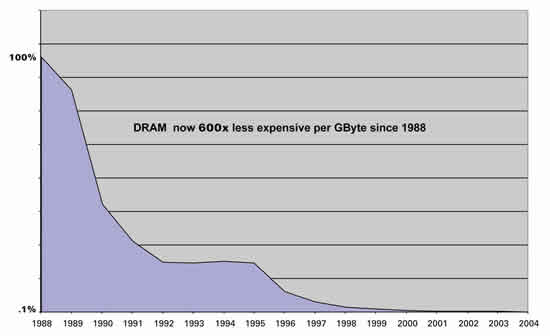

Solid state servers

Computer hard drives were designed for two basic functions in standard computer platforms: asset storage and infrequent reading/writing of content. They were not designed to spin 24/7 and pump high bit rate video. Many first-generation VOD systems rely on hard disks for both streaming and storage in a tightly coupled fashion. Replacement of failed hard drives on VOD server platforms has become both an operational and a service availability concern for many cable operators. As illustrated in Figure 4, solid state dynamic random access memory (DRAM) prices have fallen by a factor of 600 on a per-gigabyte basis over the past 17 years. This trend is causing a shift in the construction of VOD server platforms.
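
To put the 600-fold price drop over 17 years in perspective, it can be converted into an equivalent annual rate. The sketch below assumes a constant compounded rate of decline, which is a simplification since real DRAM prices do not fall smoothly. The function name is hypothetical.

```python
def annual_price_factor(total_drop_factor: float, years: float) -> float:
    """Yearly price multiplier that compounds to a 1/total_drop_factor price after `years`.

    Assumes a constant rate of decline (a simplification).
    """
    return (1.0 / total_drop_factor) ** (1.0 / years)


factor = annual_price_factor(600, 17)
# Each year's per-gigabyte price is `factor` times the previous year's price.
print(f"annual price multiplier: {factor:.3f}")
print(f"equivalent annual decline: {(1 - factor) * 100:.1f}%")
```

Under this assumption the decline works out to roughly 31 percent per year, so the per-gigabyte price would have halved about every two years.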

The same revolution happened with time division multiplexing (TDM) phone switch hardware. The four-bit microprocessor emerged in 1969 and caused a solid state revolution in the construction of TDM voice switches in the mid-1970s. This resulted in a move away from step relays with multiple moving parts. History is now repeating itself in the VOD server market.

With the continuing drop in DRAM prices, VOD servers that use DRAM technology have emerged and are being deployed. These systems decouple streaming from storage and enable the two to scale independently. They eliminate the need to purchase storage when only increased streaming capacity is required (and vice versa). The result is that power-hungry hard drives are significantly reduced in number and are again used for what they were designed for: asset storage and infrequent content paging. The design goal of second-generation server platforms is to reduce cost through the use of commodity technologies, increase reliability, and reduce power consumption by reducing the number of moving parts and motors in the overall VOD server system.

Everything on demand

The next leap appears to be toward an “everything on demand,” all-digital viewing environment.
In this model, most TV content and syndicated programming is available on demand. This model entails complex syndication and advertising rules and massive concurrent usage rates. VOD content libraries could grow to tens of thousands of hours. Additionally, the ability of the infrastructure to support massive real-time ingest and simultaneous streaming in a carrier class fashion becomes paramount.

In preparation for the all-digital, everything-on-demand future, many cable operators have upgraded, or are upgrading, their optical transport networks for native GigE support. Many are also upgrading VOD hardware infrastructure and moving toward open systems back office software. In addition, new GigE-based, open system video server technologies have emerged. These server technologies provide separation, and independent scaling, of streaming and storage and are now being deployed in centralized architectures. These server technologies and associated architectures provide a means and common platform to support current forms of VOD as well as network-based personal video recorder (PVR) and other on-demand services of tomorrow.

Christopher Skarica is VP of technical sales for Broadbus Technologies. Reach him at cskarica@broadbus.com.
