Switched Digital Video

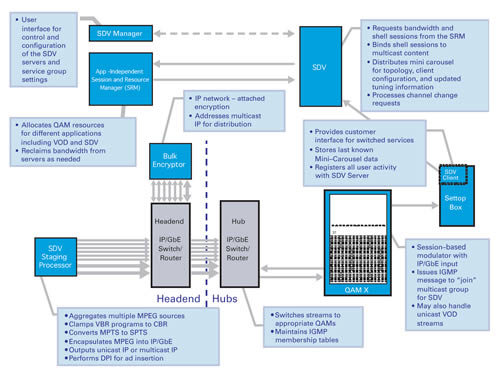

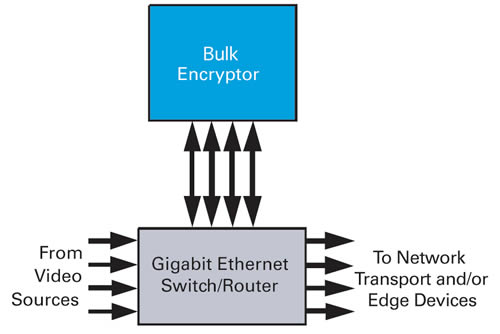

Switched digital video (SDV) technology promises to change fundamentally how digital video is delivered over cable networks, enabling cable operators to offer consumers a wider variety of programming while effectively managing HFC network bandwidth. Although the prospect of delivering SDV services over an end-to-end Internet protocol (IP) network is an attractive long-term goal for cable operators, it is unrealistic to expect to realize this infrastructure without addressing the existing installed base of some 40 million MPEG set-tops that cannot decode a DOCSIS or IP stream. For this reason, during the past several years cable operators have been experimenting with delivery of SDV services over MPEG-based switching networks.

A key hurdle that has limited the deployment of SDV services has been the absence of an open architecture that offers the system availability, scalability, performance and cost required to enable the wide-scale rollout of SDV over HFC networks to the existing installed base of MPEG-based set-tops. Over the past year, industry leaders have collaboratively developed a new, open SDV architecture. Discussed in the text that follows, this architecture contains the following major elements: staging processor, bulk encryptor, content routing network, edge-QAM (quadrature amplitude modulation) modulator, session and resource manager, SDV server, SDV manager and SDV client. (See Figure 1.) For more information on key SDV interfaces and features, please see the longer paper from which this article was drawn.

Staging processor

In a typical headend, content is received from multiple sources, including satellite, terrestrial over-the-air broadcast, fiber transport, storage media, and IP data networks. The receiving equipment for these sources uses various physical connections and interfaces.
The staging processor performs the following functions to groom sources for input to the SDV system:

– Translates source interfaces to user datagram protocol (UDP)/IP encapsulated packets carried in Ethernet frames
– Converts any variable bit rate (VBR) streams to clamped, or constant bit rate (CBR), streams and for some content may convert one bit rate to another
– Converts multi-program transport streams (MPTSs) to single-program transport streams (SPTSs)
– May perform digital program insertion (DPI) to insert local ads into the source content
– May perform segmentation of program streams into separate events for storage on a media subsystem

The output of the staging processor may be unicast or multicast IP. In the case of encrypted streams, the staging processor outputs a unicast IP stream bound for the bulk encryptor. In the case of an SDV stream for which encryption is not required, the staging processor may output a multicast stream directly into the switching network.

Bulk encryptor

Shared by multiple viewers, SDV streams are treated as broadcast streams for purposes of encryption. Since the encryption device is not aware of, and does nothing unique for, individual viewers, encryption of SDV streams is most effectively centralized in the headend rather than integrated into edge modulators and is most efficiently done with an IP network-attached bulk encryptor. The bulk encryptor is connected to a switch-router using Gigabit Ethernet (GigE) ports in bidirectional mode as shown in Figure 2. In this application, the edge QAM modulators are connected to the routed network either directly or remotely through other network and transport equipment. Clear data is sent to the bulk encryptor for encryption, and encrypted data is sent back to the network for distribution.

For encrypted switched streams, the bulk encryptor receives IP unicasts and generates and sources the IP multicasts. It may also be used to generate multicasts for unencrypted streams to simplify network management, if desired. The bulk encryptor is implemented as a network-attached device, so it is possible to migrate some or all bulk encryption to the network edge in order to accommodate future ad-insertion zones, without significant impact to the network design.

Content routing network

The actual switching in the SDV switched multicast system occurs in a standards-based layer 3 routed network. Multimedia streams output by the staging processor are input to the routed network as SPTS IP multicasts or, if bound for a bulk encryptor, as SPTS unicasts. Besides multimedia content, the content routing network may also carry IP multicast streams containing "mini-carousel" data, including server addresses and tuning information, which are generated by the SDV server. Since mini-carousel data is likely to be delivered in-band on the narrowcast QAM signals to each service group, it is necessary that the SDV server be connected to the content routing network. The network, or at least the edge switch-router, must support version 3 of the Internet Group Management Protocol (IGMPv3). This is especially important for source redundancy.

Edge QAM modulator

As in all HFC systems, the QAM modulator enables the transmission of a multiplex of digital streams via an RF carrier in an HFC spectrum channel. The digital streams may be composed of strictly MPEG transport packets or could contain IP packets as do QAM streams used for DOCSIS cable modem termination systems (CMTSs). The SDV system uses the QAM modulator to request (join) and terminate (leave) IP multicasts and to transmit programs as MPEG transport packets in RF.
IP encapsulation is not used in the RF output of the QAM modulator. Note that because IP multicast is used in the "core" of the SDV network, it is a straightforward extension of the system to continue IP transport to the end user. This can be accomplished through a CMTS in HFC access networks or through another type of edge device for other access technologies.

In the SDV system described here, the QAM modulator interfaces to an element manager for provisioning and control. Provisioning sets the output frequencies, modulation type and transport stream identifiers (TSIDs) on each output carrier and IP addresses of control and content ports. A control system or session and resource manager (SRM), which may be the same as the provisioning system, allocates QAM spectrum to various applications such as video on demand (VOD) and SDV. Spectrum may be statically or dynamically allocated to the applications on the basis of entire QAM carriers or even within QAM carriers.

For any QAM carrier to be used for SDV, the modulator must support session-based input-to-output stream mapping, as opposed to table-based mapping. Upon request of the SDV server, the SRM allocates a number of "shell sessions," each with a session identifier (sessionID), a nominal bandwidth (capacity or throughput) and an RF carrier assignment. Content multicast address, program number and actual bandwidth are not yet specified at this point; hence the name "shell" session, implying that this session is an empty reservoir or pipe to be filled with content at a later time. By using this shell-session mechanism, a portion of the available bandwidth on the edge QAM modulator is reserved for exclusive (albeit possibly temporary) use by the SDV (or other service) server. This allows the SRM to act as an arbitrator of edge QAM resource contention.

It may seem redundant for a master controller (the SRM) to allocate shell sessions for management by a subservient controller (the SDV server).
However, this capability is essential in the SDV application because of the real-time nature of SDV control traffic and the volume of this traffic that is generated during the course of ordinary TV viewing. The ability of multiple real-time sub-controllers to work within allocations of edge resource bandwidth relieves the master controller of the burden of time-critical high-volume tasks. This makes the system more reliable and more scalable while retaining the ability to share bandwidth dynamically among multiple services.

When it is required to provide a program to a user, or users, in a particular group of set-tops or service group, the SDV server will select a shell session on a QAM modulator feeding that service group. By referring to the sessionID, the SDV server will command the QAM modulator to "bind" that session to a specific program content stream. The server references this content by its IP multicast address. It further provides a program number for use at the output of the QAM modulator and also logs the actual program bandwidth.

Upon receiving session binding information for a multicast of which it is not already a member, the QAM modulator must send an IGMPv3 "membership report" requesting to "join" the specified multicast group. At this point, the edge switch-router receiving this request performs the actual switch of the content to the GigE port connected to that QAM modulator. The QAM modulator receives this content, "de-jitters" it, multiplexes it with other content on the specified carrier, and modulates the composite digital stream for transmission to the service group.

Session-based QAM modulators enable full intra-carrier QAM sharing. Carriers can be designated as "shared" between multiple applications. The SRM will set up VOD sessions and/or shell sessions on a given QAM carrier upon request.
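The binding sequence described above (select a shell session, bind it to a multicast, join the group only if the modulator is not already a member) can be illustrated with a minimal Python sketch. It is not the actual QAM control interface: the `ShellSession` and `EdgeQam` classes, the `bind` and `unbind` methods, and the multicast address used are all hypothetical, and the IGMPv3 membership report is only simulated by recording the group address in a list.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class ShellSession:
    """An empty, pre-allocated slot on one RF carrier (hypothetical model)."""
    session_id: int
    nominal_kbps: int      # capacity reserved when the shell was created
    carrier_mhz: float     # RF carrier assignment
    # The fields below stay empty until the SDV server binds content.
    multicast_addr: Optional[str] = None
    program_number: Optional[int] = None
    actual_kbps: Optional[int] = None


@dataclass
class EdgeQam:
    """Toy edge QAM modulator tracking sessions and multicast membership."""
    sessions: Dict[int, ShellSession] = field(default_factory=dict)
    joins: List[str] = field(default_factory=list)    # simulated IGMPv3 joins
    leaves: List[str] = field(default_factory=list)   # simulated IGMPv3 leaves

    def add_shell(self, session: ShellSession) -> None:
        self.sessions[session.session_id] = session

    def _memberships(self) -> Set[str]:
        # Groups currently needed by at least one bound session.
        return {s.multicast_addr for s in self.sessions.values()
                if s.multicast_addr is not None}

    def bind(self, session_id: int, multicast_addr: str,
             program_number: int, actual_kbps: int) -> None:
        session = self.sessions[session_id]
        if session.multicast_addr is not None:
            raise ValueError(f"session {session_id} is already bound")
        # Join only when the modulator is not already a member of the group.
        if multicast_addr not in self._memberships():
            self.joins.append(multicast_addr)
        session.multicast_addr = multicast_addr
        session.program_number = program_number
        session.actual_kbps = actual_kbps

    def unbind(self, session_id: int) -> None:
        session = self.sessions[session_id]
        addr = session.multicast_addr
        session.multicast_addr = None
        session.program_number = None
        session.actual_kbps = None
        # Leave only when no other session still carries the same group.
        if addr is not None and addr not in self._memberships():
            self.leaves.append(addr)


if __name__ == "__main__":
    qam = EdgeQam()
    qam.add_shell(ShellSession(1, 3750, 555.0))
    qam.add_shell(ShellSession(2, 3750, 561.0))
    qam.bind(1, "232.1.1.10", 101, 3500)
    qam.bind(2, "232.1.1.10", 7, 3500)   # same group, second carrier
    print(qam.joins)
```

In this sketch the second bind reuses the existing group membership, so only one join is recorded even though two carriers carry the program.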
The shell sessions on that carrier are then available for binding by the SDV server at the same time that VOD sessions are available on the same carrier.

SRM

The SDV system described here uses the Digital Storage Media Command and Control (DSM-CC) model of application-independent SRM. The DSM-CC SRM governs access to content and network resources and allows sharing of those resources by various applications. The SRM is considered part of the "Network" in the DSM-CC model, whereas application servers and client set-tops are "Users."

In current VOD systems, session management and resource management are implemented as separate processes on one controller, the SRM. For VOD, the SRM directly receives requests from set-tops, negotiates directly with the VOD system, and allocates QAM bandwidth. For a mix of VOD and SDV, the SRM continues to function as the primary resource manager, but it dynamically apportions parts (sessions) of the bandwidth of an individual QAM RF carrier (intra-carrier QAM sharing) to the SDV server, which, for SDV purposes, communicates directly with the set-tops and performs bindings on the QAM shell sessions and bandwidth that it has been allocated.

The SDV server uses an extension to the Time Warner Session Setup Protocol (SSP) to request, from the SRM, shell sessions on a QAM signal feeding a given service group. The SRM identifies available bandwidth on the service group QAM signal and provides shell-session space to the SDV server, thereby reserving bandwidth on the QAM modulator for exclusive use by the SDV server. In the reply from the SRM, the SDV server is given the control IP address of the QAM modulator so that the SDV server may directly control session bindings. Prior to granting QAM shell-session space (and thereby bandwidth) to the SDV server, the SRM sets up the actual shell sessions on the selected QAM modulator in order to prepare it for binding requests from the SDV server.
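As a rough illustration of this exchange, the following Python sketch models an SRM that reserves shell sessions for an SDV server on the carriers feeding one service group, alongside VOD sessions on the same carriers. It does not reproduce the SSP extension itself. The `Srm`, `Carrier` and `ShellGrant` classes, the method names, the reply fields and the bandwidth figures are assumptions made for the example, as is the choice to return a partial grant when the service group cannot satisfy the whole request.

```python
from dataclasses import dataclass, field
from itertools import count
from typing import Dict, Iterator, List


@dataclass
class Carrier:
    """One QAM RF carrier feeding a service group (hypothetical model)."""
    qam_control_ip: str
    frequency_mhz: float
    capacity_kbps: int
    reserved_kbps: int = 0   # already promised to VOD or shell sessions

    @property
    def free_kbps(self) -> int:
        return self.capacity_kbps - self.reserved_kbps


@dataclass
class ShellGrant:
    """What the SRM reply tells the SDV server about one shell session."""
    session_id: int
    qam_control_ip: str      # lets the SDV server control bindings directly
    frequency_mhz: float
    nominal_kbps: int


@dataclass
class Srm:
    carriers: Dict[str, List[Carrier]]   # service group -> its carriers
    _ids: Iterator[int] = field(default_factory=lambda: count(1))

    def request_shell_sessions(self, service_group: str, how_many: int,
                               nominal_kbps: int) -> List[ShellGrant]:
        """Reserve up to `how_many` shell sessions (assumed partial grants)."""
        grants: List[ShellGrant] = []
        for carrier in self.carriers[service_group]:
            while len(grants) < how_many and carrier.free_kbps >= nominal_kbps:
                # Reserve the bandwidth before reporting it to the SDV server.
                carrier.reserved_kbps += nominal_kbps
                grants.append(ShellGrant(next(self._ids),
                                         carrier.qam_control_ip,
                                         carrier.frequency_mhz,
                                         nominal_kbps))
        return grants

    def setup_vod_session(self, service_group: str, kbps: int) -> bool:
        """VOD draws on the same carriers (intra-carrier sharing)."""
        for carrier in self.carriers[service_group]:
            if carrier.free_kbps >= kbps:
                carrier.reserved_kbps += kbps
                return True
        return False


if __name__ == "__main__":
    srm = Srm({"SG1": [Carrier("10.0.0.5", 555.0, 38800)]})
    for grant in srm.request_shell_sessions("SG1", 4, 3750):
        print(grant)
```

Because every reservation passes through the same per-carrier counter, VOD and SDV requests cannot both claim the same bandwidth, which is the arbitration role the text assigns to the SRM.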
The QAM modulator is not told what server may request these bindings since they may come from a primary or a backup SDV server.

Since the SRM is the master bandwidth controller in the system, it may need to recover bandwidth previously assigned to the SDV server. It may do so by sending a bandwidth reclamation request to the SDV server for a specified service group. Upon receipt of such a request, the SDV server must initiate a QAM session teardown request for sufficient shell-session bandwidth to cover the reclamation.

Note that the description of resource sharing in this section has pertained to intra-carrier QAM sharing. Inter-carrier QAM sharing is simpler, but less bandwidth-efficient. In inter-carrier QAM sharing, the separate applications are allocated entire RF carriers; therefore, there is no possibility of requests from VOD and SDV "colliding."

SDV server

If the content routing network is the switching "heart" of the switched multicast system, then the SDV server is the "brain." The SDV server is part session manager in that it directly receives and processes set-top channel change requests. It is also part resource manager in that, for its allocation of QAM shell sessions, it can bind and thus assign those to real programs for transmission to the service groups.

The SDV server receives channel change requests for switched content from a set-top, binds that content to a session on a QAM modulator feeding that set-top’s service group, and responds to that set-top with the frequency and program number where that content may be found. The SDV server also fields channel change request messages for non-SDV broadcast channels in order to gather anonymous usage statistics and understand activity. The SDV server must anticipate spectrum demand for SDV and request shell sessions from the SRM before running out of available QAM bandwidth.
In the absence of new shell sessions from the SRM, the SDV server must reallocate existing bandwidth in order to provide for active users at the expense of inactive ones. Conversely, if it recognizes that it is controlling bandwidth in excess of its anticipated requirements, the SDV server may initiate session teardown requests to the SRM in order to return the excess bandwidth to the total system pool. A central controller (global SRM) ideally arbitrates this give-and-take process.

The SDV server generates a repeating (carousel) file containing a list of services currently streaming to each service group and the tuning parameters required to access them. This mini carousel serves as a redundant tuning mechanism and in some cases serves to improve channel change response time. The mini-carousel technique applies to any SDV system where the control path is independent of the media path or where the latency or reliability of interactive signaling may degrade while the media remain usable. Each service group requires a unique mini carousel, which may be replicated in each of the QAM RF carriers and/or may employ other delivery methods. This typically low-bandwidth (150 kbps) stream allows the set-top to tune to already-streaming programs in the absence of two-way communication. This makes the SDV system very reliable in the face of impaired two-way connectivity and is critical for launch of SDV in the presence of nonresponding set-tops.

The SDV server requires connection to both the control network, for communication with other network elements including the clients, and also to the content routing network in order to make the mini-carousel IP multicast available to the SDV client via the content-carrying QAM modulators. In order to prevent set-tops from accessing incorrect content due to outdated information in the mini carousel, the server must manage program numbers for SDV, ensuring that no two programs share the same program number.
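The program-number rule above can be sketched in a few lines of Python. The sketch assumes one table per service group, keyed by a source ID, and a deliberately simple policy in which each source keeps the same program number for as long as the table exists. That policy, the `MiniCarousel` class, the entry layout and the source IDs are illustrative assumptions, not part of the mini-carousel interface, and the sketch ignores the finite range of MPEG program numbers.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# One carousel entry: (source_id, carrier frequency in MHz, program number).
Entry = Tuple[int, float, int]


@dataclass
class MiniCarousel:
    """Per-service-group list of active switched services (hypothetical)."""
    next_program_number: int = 1
    program_numbers: Dict[int, int] = field(default_factory=dict)
    active: Dict[int, float] = field(default_factory=dict)

    def program_number_for(self, source_id: int) -> int:
        # A source is given a number once and keeps it, so two different
        # programs can never appear under the same program number.
        if source_id not in self.program_numbers:
            self.program_numbers[source_id] = self.next_program_number
            self.next_program_number += 1
        return self.program_numbers[source_id]

    def start(self, source_id: int, frequency_mhz: float) -> Tuple[float, int]:
        """Mark a service as streaming; return its tuning parameters."""
        self.active[source_id] = frequency_mhz
        return frequency_mhz, self.program_number_for(source_id)

    def stop(self, source_id: int) -> None:
        self.active.pop(source_id, None)

    def entries(self) -> List[Entry]:
        """Contents of the repeating file sent to this service group."""
        return [(sid, mhz, self.program_numbers[sid])
                for sid, mhz in sorted(self.active.items())]


def tune_without_return_path(entries: List[Entry], source_id: int):
    """Set-top side: find tuning parameters without asking the server."""
    for sid, mhz, program_number in entries:
        if sid == source_id:
            return mhz, program_number
    return None


if __name__ == "__main__":
    carousel = MiniCarousel()
    carousel.start(1001, 555.0)
    carousel.start(1002, 555.0)
    print(tune_without_return_path(carousel.entries(), 1002))
```

With this policy, a set-top holding a stale entry for a stopped service finds no matching program number on the carrier rather than decoding a different program that has taken over the bandwidth.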
SDV servers may be deployed in the headend or distributed at remote hubs. Since the multimedia content streams are independent of the SDV communications and control, centralizing the servers does not require separate content streams per service group. Unlike VOD, transport system cost does not appreciably increase based on SDV server location, as long as the switch-routers are distributed to the hubs.

Manager and client

The user interface for control of the SDV servers and system is in the SDV manager, which provides a means for operator configuration of the service group assignments and various settings for the SDV servers. The interface between the manager and servers is the Simple Network Management Protocol (SNMP).

In some implementations, SDV may be implemented as a software module on the same machine as the SRM. An advantage of this approach is that the service provider does not need to purchase or maintain an additional machine. An additional benefit is that when sessions are set up on the bulk encryptor to create an IP multicast stream for SDV, the SourceID-bit rate-multicast address information is automatically communicated from the SRM to the SDV manager so that the administrator does not need to enter this information twice. A potential disadvantage is that in some cases it may be more difficult to develop, test and deploy software revisions if the software is part of a larger system.

The SDV client is a software component that should be fully integrated in the resident application (navigation guide) of an SDV-enabled set-top box in order to make the SDV service transparent to the user. The SDV client enables the set-top to communicate with the SDV server using the SDV Channel Change Message Interface Specification (CCM).

Interfaces, features, next-gen

The CCM is only one of several interfaces required in an open SDV system. Others concern the mini carousel, shell-session setup, session binding and server interactive session requests.
As indicated earlier, more details on those interfaces, as well as on SDV operation and features, are contained within the longer paper from which this article is drawn.

The elements discussed here comprise the core of an open architecture for delivery of SDV to the existing, installed base of MPEG-based set-tops. The architecture itself is an important element of a comprehensive next-generation architecture strategy (see Figure 3) and provides an important step from linear-broadcast delivery to a nonlinear, switched delivery model based on an IP core infrastructure.

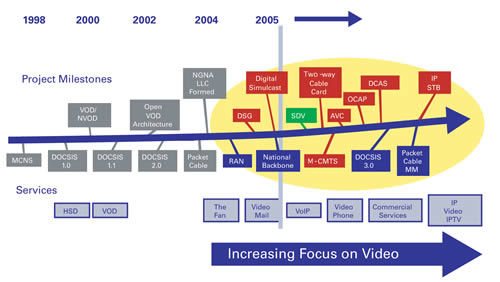

For encrypted switched streams, the bulk encryptor receives IP unicasts and generates and sources the IP multicasts. It may also be used to generate multicasts for unencrypted streams to simplify network management, if desired. The bulk encryptor is implemented as a network attached device, so it is possible to migrate some or all bulk encryption to the network edge in order to accommodate future ad-insertion zones, without significant impact to the network design. Content routing network The actual switching in the SDV switched multicast system occurs in a standards-based layer 3 routed network. Multimedia streams output by the staging processor are input to the routed network as SPTS IP multicasts or SPTS unicasts if bound for a bulk encryptor. Besides multimedia content, the content routing network may also carry IP multicast streams containing "mini-carousel" data, including server addresses and tuning information, which are generated by the SDV server. Since mini-carousel data is likely to be delivered in-band on the narrowcast QAM signals to each service group, it is necessary that the SDV server be connected to the content routing network. The network, or at least the edge switch-router, must support version 3 of the Internet Group Management Protocol (IGMPv3). This is especially important for source redundancy. Edge QAM modulator As in all HFC systems, the QAM modulator enables the transmission of a multiplex of digital streams via an RF carrier in an HFC spectrum channel. The digital streams may be composed of strictly MPEG transport packets or could contain IP packets as do QAM streams used for DOCSIS cable modem termination systems (CMTSs). The SDV system uses the QAM modulator to request (join) and terminate (leave) IP multicasts and to transmit programs as MPEG transport packets in RF. IP encapsulation is not used in the RF output of the QAM modulator. 
Note that because IP multicast is used in the "core" of the SDV network, it is a straightforward extension of the system to continue IP transport to the end user. This can be accomplished through a CMTS in HFC access networks or through another type of edge device for other access technologies. In the SDV system described here, the QAM modulator interfaces to an element manager for provisioning and control. Provisioning sets the output frequencies, modulation type and transport stream identifiers (TSIDs) on each output carrier and IP addresses of control and content ports. A control system or session and resource manager (SRM), which may be the same as the provisioning system, allocates QAM spectrum to various applications such as video on demand (VOD) and SDV. Spectrum may be statically or dynamically allocated to the applications on the basis of entire QAM carriers or even within QAM carriers. For any QAM carrier to be used for SDV, the modulator must support session-based input-to-output stream mapping, as opposed to table-based mapping. Upon request of the SDV server, the SRM allocates a number of "shell sessions" each with a session identifier (sessionID), a nominal bandwidth (capacity or throughput) and an RF carrier assignment. Content multicast address, program number and actual bandwidth are not yet specified at this point; hence the name "shell" session, implying that this session is an empty reservoir or pipe to be filled with content at a later time. By using this shell-session mechanism, a portion of the available bandwidth on the edge QAM modulator is reserved for exclusive (albeit possibly temporary) use by the SDV (or other service) server. This allows the SRM to act as an arbitrator of edge QAM resource contention. It may seem redundant for a master controller (the SRM) to allocate shell sessions for management by a subservient controller (the SDV server). 
However, this capability is essential in the SDV application because of the real-time nature of SDV control traffic and the volume of this traffic that is generated during the course of ordinary TV viewing. The ability of multiple real-time sub-controllers to work within allocations of edge resource bandwidth relieves the master controller of the burden of time-critical high-volume tasks. This makes the system more reliable and more scalable while retaining the ability to share bandwidth dynamically among multiple services. When it is required to provide a program to a user, or users, in a particular group of set-tops or service group, the SDV server will select a shell session on a QAM modulator feeding that service group. By referring to the sessionID, the SDV server will command the QAM modulator to "bind" that session to a specific program content stream. The server references this content by its IP multicast address. It further provides a program number for use at the output of the QAM modulator and also logs the actual program bandwidth. Upon receiving session binding information for a multicast of which it is not already a member, the QAM modulator must send an IGMPv3 "membership report" requesting to "join" the specified multicast group. At this point, the edge switch-router receiving this request performs the actual switch of the content to the GigE port connected to that QAM modulator. The QAM modulator receives this content, "de-jitters" it, multiplexes it with other content on the specified carrier, and modulates the composite digital stream for transmission to the service group. Session-based QAM modulators enable full intra-carrier QAM sharing. Carriers can be designated as "shared" between multiple applications. The SRM will set up VOD sessions and/or shell sessions on a given QAM carrier upon request. 
The shell sessions on that carrier are then available for binding by the SDV server at the same time that VOD sessions are available on the same carrier. SRM The SDV system described here uses the Digital Storage Media Command and Control (DSM-CC) model of application-independent SRM. The DSM-CC SRM governs access to content and network resources and allows sharing of those resources by various applications. The SRM is considered part of the "Network" in the DSM-CC model, whereas application servers and client set-tops are "Users." In current VOD systems, session management and resource management are implemented as separate processes on one controller, the SRM. For VOD, the SRM directly receives requests from set-tops, negotiates directly with the VOD system, and allocates QAM bandwidth. For a mix of VOD and SDV, the SRM continues to function as the primary resource manager, but it dynamically apportions parts (sessions) of the bandwidth of an individual QAM RF carrier (intra-carrier QAM sharing) to the SDV server, which, for SDV purposes, communicates directly with the set-tops and performs bindings on the QAM shell sessions and bandwidth that it has been allocated. The SDV server uses an extension to the Time Warner Session Setup Protocol (SSP) to request, from the SRM, shell sessions on a QAM signal feeding a given service group. The SRM identifies available bandwidth on the service group QAM signal and provides shell-session space to the SDV server, thereby reserving bandwidth on the QAM modulator for exclusive use by the SDV server. In the reply from the SRM, the SDV server is given the control IP address of the QAM modulator so that the SDV server may directly control session bindings. Prior to granting QAM shell-session space (and thereby bandwidth) to the SDV server, the SRM sets up the actual shell sessions on the selected QAM modulator in order to prepare it for binding requests from the SDV server. 
The QAM modulator is not told what server may request these bindings since they may come from a primary or a backup SDV server. Since the SRM is the master bandwidth controller in the system, it may need to recover bandwidth previously assigned to the SDV server. It may do so by sending a bandwidth reclamation request to the SDV server for a specified service group. Upon receipt of such a request, the SDV server must initiate a QAM session teardown request for sufficient shell-session bandwidth to cover the reclamation. Note that description of resource sharing in this section has pertained to intra-carrier QAM sharing. Inter-carrier QAM sharing is simpler, but less bandwidth-efficient. In inter-carrier QAM sharing, the separate applications are allocated entire RF carriers; therefore, there is no possibility of requests from VOD and SDV "colliding." SDV Server If the content routing network is the switching "heart" of the switched multicast system, then the SDV server is the "brain." The SDV server is part session manager in that it directly receives and processes set-top channel change requests. It is also part resource manager in that, for its allocation of QAM shell sessions, it can bind and thus assign those to real programs for transmission to the service groups. The SDV server receives channel change requests for switched content from a set-top to bind that content to a session on a QAM modulator feeding that set-top’s service group, and responds to that set-top with the frequency and program number where that content may be found. The SDV server also fields channel change request messages for nonSDV broadcast channels in order to gather anonymous usage statistics and understand activity. The SDV server must anticipate spectrum demand for SDV and request shell sessions from the SRM before running out of available QAM bandwidth. 
In the absence of new shell sessions from the SRM, the SDV server must reallocate existing bandwidth in order to provide for active users at the expense of inactive ones. Conversely, if it recognizes that it is controlling bandwidth in excess of its anticipated requirements, the SDV server may initiate session teardown requests to the SRM in order to return the excess bandwidth to the total system pool. A central controller (global SRM) ideally arbitrates this give-and-take process. The SDV server generates a repeating (carousel) file containing a list of services currently streaming to each service group and the tuning parameters required to access them. This mini carousel serves as a redundant tuning mechanism and in some cases serves to improve channel change response time. The mini-carousel technique applies to any SDV system where the control path is independent of the media path or where the latency or reliability of interactive signaling may degrade while the media remain usable. Each service group requires a unique mini carousel, which may be replicated in each of the QAM RF carriers and/or may employ other delivery methods. This typically low-bandwidth (150 kbps) stream allows the set-top to tune already-streaming programs in the absence of two-way communication. This makes the SDV system very reliable in the face of impaired two-way connectivity and is critical for launch of SDV in the presence of nonresponding set-tops. The SDV server requires connection to both the control network, for communication with other network elements including the clients, and also to the content routing network in order to make the mini-carousel IP multicast available to the SDV client via the content-carrying QAM modulators. In order to prevent set-tops from accessing incorrect content due to outdated information in the mini carousel, the server must manage program numbers for SDV ensuring that no two programs share the same program number. 
SDV servers may be deployed in the headend or distributed at remote hubs. Since the multimedia content streams are independent of the SDV communications and control, centralizing the servers does not require separate content streams per service group. Unlike VOD, transport system cost does not appreciably increase based on SDV server location, as long as the switch-routers are distributed to the hubs. Manager and client The user interface for control of the SDV servers and system is in the SDV manager, which provides a means for operator configuration of the service group assignments and various settings for the SDV servers. The interface between the manager and servers is simple network management protocol (SNMP). In some implementations, SDV may be implemented as a software module on the same machine as the SRM. An advantage of this approach is that the service provider does not need to purchase or maintain an additional machine. An additional benefit is that when sessions are set up on the bulk encryptor to create an IP multicast stream for SDV, the SourceID-bit rate-multicast address information is automatically communicated from the SRM to the SDV manager so that the administrator does not need to enter this information twice. A potential disadvantage is that in some cases it may be more difficult to develop, test and deploy software revisions if the software is part of a larger system. The SDV client is a software component that should be fully integrated in the resident application (navigation guide) of an SDV-enabled set-top box in order to make the SDV service transparent to the user. The SDV client enables the set-top to communicate to the SDV server using the SDV Channel Change Message Interface Specification (CCM). Interfaces, features, next-gen The CCM is only one of several interfaces required in an open SDV system. Others concern the mini carousel, shell-session setup, session binding and server interactive session requests. 
As indicated earlier, more details on those interfaces, as well as on SDV operation and features, are contained in the longer paper from which this article is drawn. The elements discussed here comprise the core of an open architecture for delivery of SDV to the existing installed base of MPEG-based set-tops. The architecture itself is an important element of a comprehensive next-generation architecture strategy (see Figure 3) and provides an important step from linear-broadcast delivery to a nonlinear, switched delivery model based on an IP core infrastructure.

To address the existing installed base of MPEG set-tops, the described SDV architecture features an out-of-band signaling mechanism that is structurally consistent with the PacketCable Multimedia (PCMM) and IP Multimedia Subsystem (IMS) architecture models for non-IP clients. However, the SDV architecture can be evolved to support IP-based clients and, in conjunction with the DOCSIS, PCMM and IMS architecture models, to provide a multi-service, multi-client architecture that enables "any source to any user" IP-based video delivery. The open architecture described here is designed to accelerate the development of technologies and solutions for delivery of SDV services over HFC networks; be more reliable, more cost-effective, and more scalable than existing architectures; and help evolve next-generation networks to enhance cable operators’ competitiveness and position the cable industry for continued profitable growth.

Luis Rovira is principal engineer and Lorenzo Bombelli is director, network architecture and strategy at Scientific-Atlanta; Paul Brooks is senior network architect at Time Warner Cable. They can be reached respectively at lu.rovira@sciatl.com, lorenzo.bombelli@sciatl.com and paul.brooks@twcable.com. This article is drawn from a paper delivered at the SCTE Conference on Emerging Technologies.
