Docitive Networks – The Next Femto Evolution

The capital and operating expenses (capex and opex) a carrier takes on to deploy Long-Term Evolution (LTE) 4G wireless technology can be staggering. However, smart choices about which 4G technologies to implement can offset a good share of this burden and pay off substantially in the long run. One of the most promising 4G technologies on the horizon is the Self-Organizing Network (SON) applied to femtocell micro-networks in enclosed spaces such as office buildings, stadiums and railroad stations.

In a SON, a new femtocell added to a micro-network learns from the femtocells already on that network, configuring and optimizing itself without manual installation. The femtocells in a SON communicate constantly and can autonomously recognize and adapt to changes in their environment, maintaining a high quality of service (QoS). Because the femtocells on the micro-network are in constant contact, if one goes down, the three or four nearest femtocells detect the change and can immediately pick up the lost coverage. A SON femtocell micro-network therefore offers a robust solution with built-in redundancy.
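The redundancy argument can be illustrated with a toy model. The sketch below assumes femtocells at known positions on a floor plan, treats every live cell within a fixed radius of a failed one as a neighbor that detects the failure, and hands each affected user to the nearest such neighbor. The `Femtocell` class, the 30 m radius and the nearest-cell rule are hypothetical simplifications, not a standardized SON procedure.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Femtocell:
    """Hypothetical femtocell record: name, floor-plan position and attached users."""
    name: str
    x: float
    y: float
    alive: bool = True
    users: list = field(default_factory=list)


def distance(a, b):
    """Euclidean distance between two (x, y) points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def neighbors(cells, failed, radius):
    """Live femtocells within `radius` of the failed cell (assumed neighbor rule)."""
    return [c for c in cells
            if c.alive and c is not failed
            and distance((c.x, c.y), (failed.x, failed.y)) <= radius]


def handle_failure(cells, failed, user_positions, radius=30.0):
    """Mark `failed` as down and hand each of its users to the nearest live neighbor."""
    failed.alive = False
    nearby = neighbors(cells, failed, radius)
    if not nearby:
        return []  # no neighbor in range: the failure leaves a coverage hole
    for user in failed.users:
        pos = user_positions[user]
        best = min(nearby, key=lambda c: distance((c.x, c.y), pos))
        best.users.append(user)
    failed.users = []
    return nearby
```

For example, if cell A fails while B and C sit 20 m away and D is far across the venue, A's users are split between B and C according to where each user stands, and D is left untouched.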

Because a SON optimizes itself, remote management is simpler, reducing the need for constant manual optimization and RF engineer man-hours. Besides lowering opex, a SON femtocell micro-network can also open up new revenue streams targeted at consumers at the venue. For example, a football fan at a stadium could receive an SMS offering, for $5, updates on all the college scores while he watches the game.

‘Thinking’ Technology

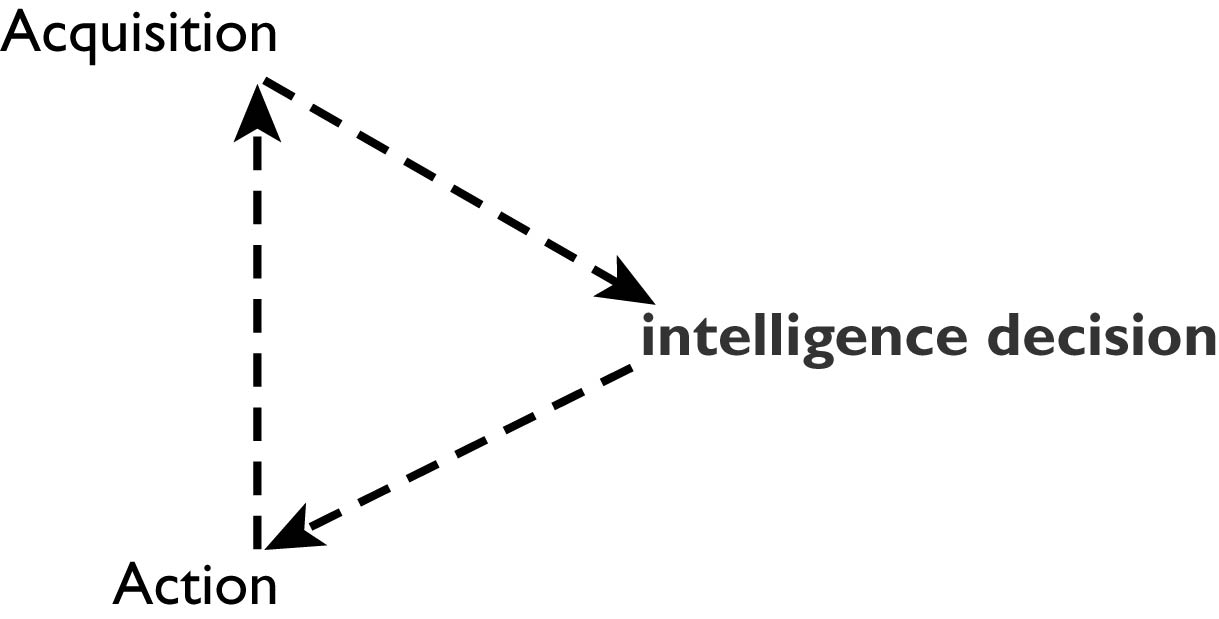

Autonomous management draws on the capabilities of perceiving, knowing, remembering, thinking and judging, turning problem-solving into intelligent actions. The concept is rooted in Problem Based Learning (PBL), which provides a framework of observations aimed at significantly improving network performance. The Cognitive Radio Cycle (Figure 1) illustrates how observations and actions lead to an intelligent decision.

The results from the PBL observations form a network that:

Shares observations, creating a cooperative sensing environment.

Distributes those observations across the multi-agent femtocell network, creating a machine learning environment.

Ultimately becomes a distributed Artificial Intelligence community.

|

| FIGURE 1: Cognitive Radio Cycle |

|

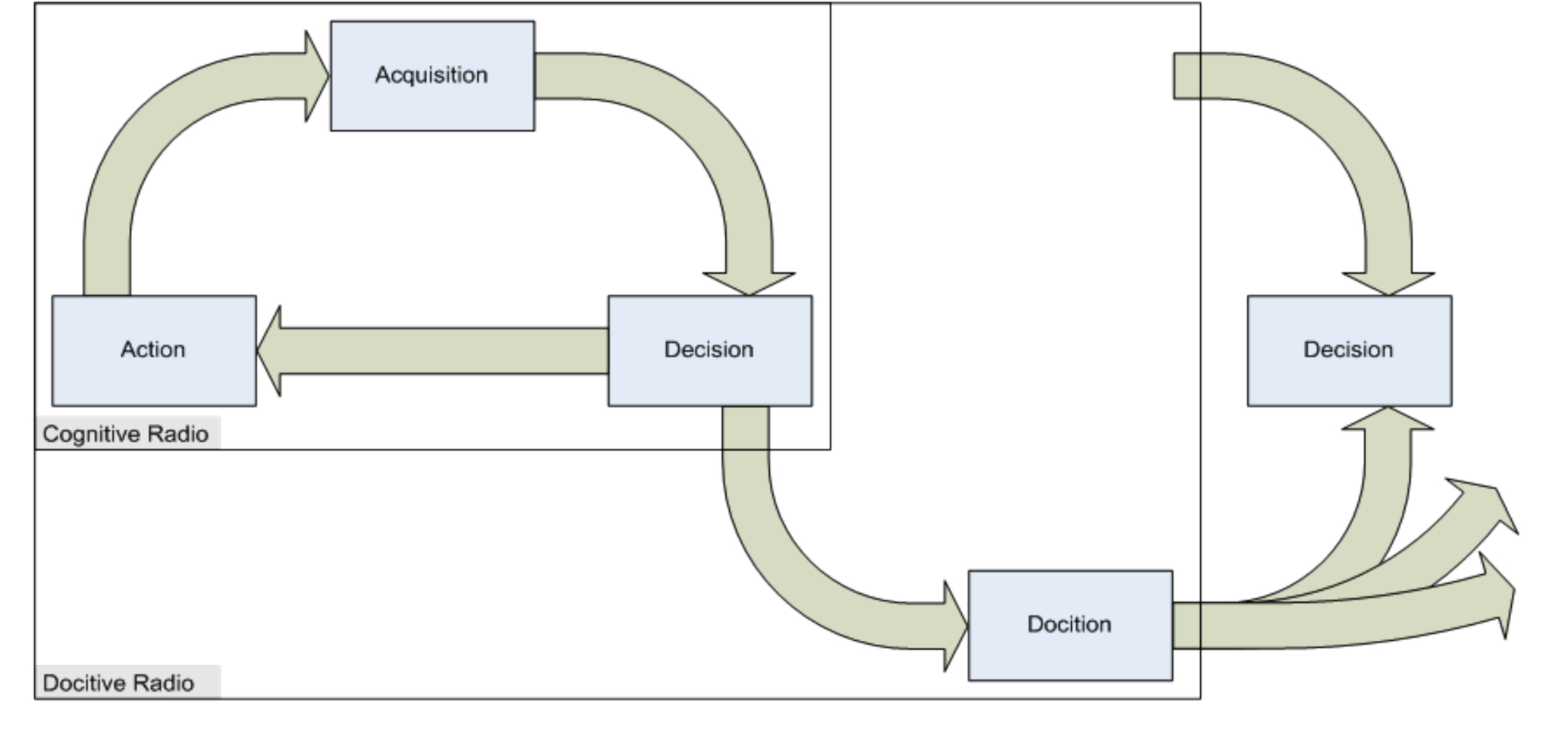

| FIGURE 2: Docitive System Cycle [2] |

The femtocell network consists of multiple agents. Each femtocell starts with no prior information but is capable of solving interference problems, and there is no central command and control of the agents.

Femto agents realize docition (from the Latin docere, "to teach") by disseminating and propagating information, which facilitates learning. The multi-femto agent network manages the signal-to-noise ratio received from the macro network; this information is required for seamless interoperability between the macro network and the multi-femto agent network.

For networked femtocell agents, Q-learning, which is grounded in the Markov Decision Process (MDP), has been studied with a focus on independent learning, in which each agent learns on its own and convergence is not guaranteed. This lack of guaranteed convergence has drawbacks: slower learning cycles and some cyclic behaviors. To overcome them, docition is used: expert femto agents share knowledge with less knowledgeable femto agents over the wireless medium, yielding learned decision policies. Under this concept, each femto agent learns from agents with greater expertness that operate in similar circumstances. There are two levels of docition to consider:
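To make the independent-learning baseline concrete, here is a minimal tabular Q-learning sketch for a single femto agent. The state and action encoding (discretized interference levels at a nearby macro user, indices into a list of transmit power levels), the class name `FemtoQAgent` and the parameter values are illustrative assumptions, not details from the cited work. Each agent updates only its own table, which is why, with many agents adapting at once, convergence is not guaranteed.

```python
import random


class FemtoQAgent:
    """Independent tabular Q-learner for one femto agent (illustrative sketch).

    Hypothetical encoding: states are discretized interference levels at a
    nearby macro user; actions are indices into a list of transmit power levels.
    """

    def __init__(self, n_states, n_actions, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
        self.q = [[0.0] * n_actions for _ in range(n_states)]
        self.alpha = alpha      # learning rate
        self.gamma = gamma      # discount factor
        self.epsilon = epsilon  # exploration probability
        self.rng = random.Random(seed)

    def choose_action(self, state):
        """Epsilon-greedy selection: explore at random, otherwise take the best-known action."""
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(self.q[state]))
        row = self.q[state]
        return max(range(len(row)), key=row.__getitem__)

    def update(self, state, action, reward, next_state):
        """One Q-learning step: Q(s,a) += alpha * (r + gamma * max_a' Q(s',a') - Q(s,a))."""
        target = reward + self.gamma * max(self.q[next_state])
        self.q[state][action] += self.alpha * (target - self.q[state][action])
```

Nothing in this update looks at other agents; the docition levels described next are what add knowledge sharing on top of it.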

Startup Docition: Experienced femto agents teach their policies to new femto agents added to the network. Policies are shared among femto agents with similar operating conditions.

IQ-Driven Docition: Femto agents periodically share portions of their policies with less-expert femto agents operating in similar conditions. This allows continuous improvement as femto agents experience different environments.
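The two docition levels can be sketched on top of tabular Q-values. In this sketch, which is an assumption rather than the method of [2], each agent advertises a hypothetical `AgentRecord` holding an operating-condition value (here, distance to the nearest macro user), an expertness score (here, accumulated reward) and its Q-table. Startup docition copies the whole table of the most expert similar peer; IQ-driven docition blends a chosen portion of that peer's rows into the pupil's table with a fixed weight.

```python
import copy
from dataclasses import dataclass


@dataclass
class AgentRecord:
    """What a femto agent advertises to its peers (hypothetical fields)."""
    name: str
    condition: float   # assumed similarity feature, e.g. metres to nearest macro user
    expertness: float  # assumed expertness metric, e.g. accumulated reward
    q: list            # Q-table: one row of action values per state


def best_teacher(pupil, peers, tolerance):
    """Most expert peer in similar conditions that is more expert than the pupil, or None."""
    candidates = [p for p in peers
                  if p is not pupil
                  and abs(p.condition - pupil.condition) <= tolerance
                  and p.expertness > pupil.expertness]
    return max(candidates, key=lambda p: p.expertness, default=None)


def startup_docition(newcomer, peers, tolerance=5.0):
    """Startup docition: a new agent copies the full policy of its best teacher."""
    teacher = best_teacher(newcomer, peers, tolerance)
    if teacher is not None:
        newcomer.q = copy.deepcopy(teacher.q)  # independent copy, not a shared reference
    return teacher


def iq_driven_docition(pupil, peers, weight=0.3, tolerance=5.0, states=None):
    """IQ-driven docition: blend part of a more expert peer's Q-values into the pupil's.

    `states` picks which rows (the shared portion of the policy) are blended;
    all rows are blended when it is None.
    """
    teacher = best_teacher(pupil, peers, tolerance)
    if teacher is None:
        return None
    rows = range(len(pupil.q)) if states is None else states
    for s in rows:
        pupil.q[s] = [(1 - weight) * mine + weight * theirs
                      for mine, theirs in zip(pupil.q[s], teacher.q[s])]
    return teacher
```

The full copy lets a newcomer skip learning from scratch, while the weighted blend lets an established agent keep most of what it has learned about its own surroundings; `weight` sets how much it trusts the teacher.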

This novel approach has been shown to retain the advantages of SONs while, more importantly, overcoming the drawbacks of independent learning described above. Applying a society-driven, pupil/teacher model to multi-femto agent networks has proved to bring out the advantages of self-regulating, self-optimizing networks, which are more robust and better able to adapt to an ever-changing RF environment while still maintaining superior QoS.

Current Developments

The completed simulations have shown extraordinary results. A properly designed learning process achieves several objectives:

Interference at the macro user can be held at desirable levels, protecting macro capacity, regardless of the density of femto agents and their operating patterns.

The total transmission power of the femto agents can be kept low.

Applying docition at start-up and then continuously during operation significantly improves convergence speed and precision.
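One way to see how a learning process can pursue the first two objectives is through the reward each femto agent receives. The function below is a hypothetical example, not the reward used in the simulations reported here: it penalizes any shortfall of the macro user's SINR below an assumed target (a stand-in for "desirable interference levels") and adds a small cost proportional to the femto agent's transmit power, so agents are pushed toward the lowest power that still protects the macro user. All names, units and weights are assumptions.

```python
def femto_reward(macro_sinr_db, tx_power_mw, sinr_target_db=20.0,
                 power_weight=0.1, violation_penalty=10.0):
    """Hypothetical per-step reward for one femto agent.

    - Penalizes every dB by which the macro user's SINR falls below the target,
      standing in for keeping interference at the macro user acceptable.
    - Subtracts a small cost proportional to transmit power in mW,
      rewarding low total femto power.
    """
    shortfall_db = max(0.0, sinr_target_db - macro_sinr_db)
    return -violation_penalty * shortfall_db - power_weight * tx_power_mw
```

Because the penalty for hurting the macro user is much larger than the power cost, a learner maximizing this reward first protects the macro user and only then trims its own power.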

Operators that use self-optimizing networks realize savings because their RF engineers can manage additional networks while delivering better QoS to customers in dense, high-traffic environments. Better QoS in turn reduces churn, with the network doing the optimization work.

SON technology is undergoing formal certification, with availability targeted for mid-2011.

Eric Moore is CTO at Axis Teknologies. Contact him at emoore@axisteknologies.com.

References:

[1] BeFEMTO – Broadband Evolved FEMTO Networks, copyright 2010.

[2] Lorenza Giupponi, Ana Galindo-Serrano, Pol Blasco, Mischa Dohler, "Docitive Networks – An Emerging Paradigm for Dynamic Spectrum Management."