Digital Video Technologies

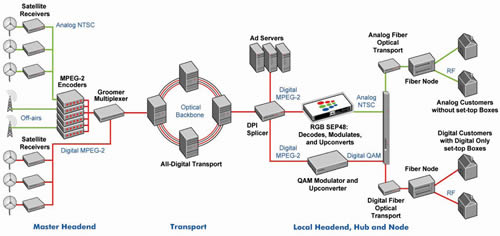

Last year, cable operators ramped up their use of advanced digital video processing. From encoding and multiplexing at the master headend to digital program insertion (DPI) in the metro and local areas to decoding and processing at the edge, cable’s technical teams got behind the wheel of new technologies and processes and put them through their paces.

The rapid deployment of digital simulcast drove much of this activity. Maturing standards and cable’s growing Internet protocol (IP) infrastructure were other factors driving this rapid evolution of cable’s core service.

Don’t expect this pace to slow. Advances in cable’s use of single program transport streams and what Time Warner Cable calls switched digital video (SDV) promise to increase the efficiency of the industry’s video networks and minimize competitive threats.

For all that’s new, however, some of the underlying issues are evergreen, such as how to handle video content that varies in type and quality.

Multiple sources

Video comes to cable’s transmission networks in several ways. A predominant path involves satellite transmission via quadrature phase shift keying (QPSK). The rise of national IP backbones, such as the one that Comcast is building, promises to reduce the use of satellite transponders and increase the role of fiber links, which studios already use to deliver some video in metropolitan areas. Over-the-air reception and storage media account for the arrival of other video.

Along with the range of video feeds comes a host of content characteristics, a point emphasized by encoder vendor EGT.

"You’ve also got digital content from some providers where the bit rates are so high the cable operator can’t use it in its correct format, so you have to massage it first," says Chris Gordon, EGT’s director of product management. "You have all of these different types of content. Some of it is very clean and beautiful, and some of it is really noisy and has a lot of artifacts in it," he says.
Cable operators understand well that content requires different kinds of care and feeding.

"You have different needs for, say, movies vs. talking heads vs. local channels, for instance," says Steve Watkins, Cox Communications’ director of digital video technology. "The nature of the community channel feed is such that it doesn’t require the high action and compression that ESPN or another sports package would."

In particular, Cox found that because local community channels aren’t always broadcast quality, it had to do some additional filtering to maintain a quality picture.

"While you may think you can use a less expensive encoder for these community channels, you may actually have to deploy a more expensive encoder to facilitate the noise that exists in those channels, which was counter-intuitive to how we thought it would be," Watkins says.

Open and closed

Cox isn’t offering details yet on its digital simulcast plans, but Adelphia started its digital simulcast deployment in the Los Angeles area and expected to complete 8-10 markets nationwide by early 2006.

Such deployments have helped drive the demand for more efficient digital video processing. Closed-loop encoding, for example, has enabled Cox, Adelphia, Charter and the Comcast Media Center to leverage the efficiency of statistical multiplexing, which delivers more channels with better quality.

The closed-loop system consists of multiple encoders, usually 12-14, interconnected to a single statistical multiplexer.

"The advantage of that solution is the feedback loop between all encoded channels and the statistical multiplexer, which communicates real-time encoding information back to the encoders for improved video quality with very efficient bit rates," says Basil Badawiyeh, Adelphia’s manager of advanced video engineering and development.

The closed-loop statistical multiplexer looks at video bit rates and complexity information across all encoded content and compares the channels against each other.
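
The allocation step of that feedback loop can be sketched in a few lines. This is only an illustration under stated assumptions, not any vendor's algorithm: the complexity scores, the per-channel floor rate and the proportional-share rule are all hypothetical, and the 38 Mbps budget is simply a typical payload for one cable QAM channel. A real statistical multiplexer would repeat a step like this continuously, feeding each new set of rates back to the encoders.

```python
def allocate_bitrates(complexities, total_mbps=38.0, floor_mbps=1.0):
    """Split a fixed multiplex budget across channels by relative complexity.

    complexities: dict mapping channel name -> complexity score reported by
                  its encoder (hypothetical unit; higher means harder to encode).
    total_mbps:   bit rate the multiplexer has to spend (assumed 38 Mbps).
    floor_mbps:   minimum rate guaranteed to every channel (assumed value).
    """
    if not complexities:
        return {}
    count = len(complexities)
    reserved = floor_mbps * count
    if reserved > total_mbps:
        raise ValueError("floor rates exceed the multiplex budget")

    spare = total_mbps - reserved
    total_complexity = sum(complexities.values())

    rates = {}
    for name, score in complexities.items():
        # With no complexity information, fall back to an even split.
        share = score / total_complexity if total_complexity > 0 else 1.0 / count
        rates[name] = floor_mbps + spare * share
    return rates


if __name__ == "__main__":
    # One interval: the sports feed reports high complexity, the talk show low.
    report = {"sports": 9.0, "movie": 5.0, "talk": 2.0, "community": 4.0}
    for channel, mbps in allocate_bitrates(report).items():
        print(f"{channel}: {mbps:.1f} Mbps")
```

The floor keeps a momentarily simple channel from being starved, while everything above the floor follows the encoders' reports, so the rates always add up to the multiplex budget.
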
If there is a piece of content that can get by with fewer bits without impacting video quality, the multiplexer reassigns these bits to another piece of content that needs more. The statistical multiplexer then passes that information back to the encoders, which re-encode the content accordingly.

"The basic idea of closed loop encoding is the statistical multiplexer knows how many megabits per second it has to spend, and typically for cable that’s 38 Mbps," EGT’s Gordon says. "If I have 38 Mbps to spend and I have 50 channels, I’m going to give each of those megabits per second to each video differently based on what it needs at that given moment in time."

Cable operators are also using open-loop encoding for some of their digital content, but in general they use closed-loop encoding for premium, high-end channels. The difference is that an open-loop encoder usually performs a single task, with analog or baseband video on one side and MPEG-2 on the other. It is a one-pass device that converts content into MPEG-2.

Cox’s Watkins says the type of content dictates whether it heads to open or closed-loop encoding.

"We do the logical," he says. "We don’t try to put a lot of sports into the same multiplex because of the demands you get when there’s a lot of action. We basically try to split the multiplexing across multiple channels, and in so doing we can range anywhere from eight up to 12 channels within a multiplex. That typically leaves you enough overhead to do the digital program insertion as well, and that gets factored into the overall multiplex environment."

Centralized DPI

As advances in digital video processing have accompanied cable’s growing regional and national IP infrastructure and the rise of other standards-based technologies, such as DPI, the overall combination has proven to be potent. Charter, for instance, launched its first digital simulcast in 2003 in Long Beach, CA.
Components included a closed-loop statistical multiplexing system and variable bit rate (VBR) encoders from Harmonic, ad servers from C-COR (then nCUBE) and splicing gear from Terayon, all of which were SCTE-35 DPI cue-tone compliant. That was followed by simulcasts in Wisconsin and St. Louis.

One of the ensuing benefits has been the ability to centralize DPI, says Pragash Pillai, Charter’s vice president of advanced engineering, digital video.

"As long as you have a knowledge of the equipment in an IP world, you can pretty much reach anywhere if you have IP transport and connectivity," Pillai says. "That’s the nice thing about deploying DPI over IP in a simultrans market. IP’s cost curve is going down quite a bit compared to traditional video products. By interfacing over IP, we have more centralizations, and it’s more cost effective."

By centralizing its servers, Charter reduced capital expenditure because it no longer needed to duplicate ad servers throughout the market. Once the server was centralized, Charter could also centralize the splicing or distribute it based on data capacity and connectivity in each market.

Another advantage of IP is the ability to hook up an encoder in one part of the country to a statistical multiplexer in another location, which wasn’t possible with asynchronous serial interface (ASI) because of its distance limitations. Pillai says Charter gained that capability in St. Louis last year.

Edge decoding

Once MPEG-2 digital video has been groomed, encoded, sent through a statistical multiplexer and had digital ads inserted, it can take one of two paths in a simulcast architecture. In the digital version of the broadcast channel, the programs are sent to a quadrature amplitude modulation (QAM) modulator and then out over the HFC network directly to digital set-top boxes. A potential second path is through an edge decoder, where the video is decoded into analog NTSC, modulated and then upconverted.
RGB’s simulcast edge processor, which Adelphia selected for its digital simulcast rollout, combines these tasks in a one-rack-unit platform. (See Figure 1.)

Here is another example of advanced video processing, very densely configured in this case, combining with a robust metro and wide area network (WAN) to create noteworthy advantages for cable operators.

"The benefit of our box is that it allows cable operators to convert their regional area networks and optical backbones to an all-digital environment," says Adam Tom, RGB’s president and CEO. "(That) allows them greater efficiencies in operations and how they train their technical people by having only one set of transmission gear," he says. "They only need to have a digital set and not an analog and digital version, which is what they had prior to these simulcast and digital deployments."

Digital still evolving

The digital simulcast deployments that began last year were only the "first wave," to borrow from the title of a paper presented at the SCTE Conference on Emerging Technologies by Adelphia’s Badawiyeh and BigBand Networks Director of Product Marketing Sylvain Riviere. (See Sidebar 2.)

Not only will the industry likely see more digital simulcast waves, and more specifically targeted ones, but an ocean of future digital innovations also lies offshore. Much of that future relates to the rise of national backbones and the evolution of video networks toward an IP-based distribution system.

Riviere envisions a future where single program transport streams (SPTSs), similar to what a video-on-demand (VOD) customer request produces today, replace today’s multi-program transport streams (MPTSs). An MPTS is built by taking multiple video programs and multiplexing them as a static bundle for the sake of efficiency. Other than rate shaping, the MPTS stays bundled together. With SPTSs, more processing can be done to each program.
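
The core of turning an MPTS into SPTSs is sorting transport stream packets by packet identifier (PID). The sketch below is a simplified illustration, not a description of any vendor's product. It relies on two facts of the MPEG-2 transport stream format: packets are 188 bytes long and begin with the sync byte 0x47, and the 13-bit PID sits in the second and third header bytes. The program-to-PID table (`program_pids`) and the `make_packet` helper are hypothetical; a real demultiplexer would learn the PIDs by parsing the PAT and PMT tables and would also write a new PAT and PMT into each output stream.

```python
from collections import defaultdict

TS_PACKET_SIZE = 188  # fixed MPEG-2 transport stream packet length
SYNC_BYTE = 0x47      # first byte of every transport stream packet


def packet_pid(packet):
    """Return the 13-bit PID carried in a transport stream packet header."""
    if len(packet) != TS_PACKET_SIZE or packet[0] != SYNC_BYTE:
        raise ValueError("not a valid transport stream packet")
    return ((packet[1] & 0x1F) << 8) | packet[2]


def split_mpts(mpts, program_pids):
    """Group the packets of a multi-program stream by program.

    mpts:         bytes holding whole 188-byte packets.
    program_pids: hypothetical table mapping program name -> set of PIDs,
                  assumed to be known already (normally from the PAT/PMT).
    Returns a dict mapping program name -> bytes of that program's packets.
    """
    pid_to_program = {
        pid: program for program, pids in program_pids.items() for pid in pids
    }
    streams = defaultdict(bytearray)
    for offset in range(0, len(mpts) - TS_PACKET_SIZE + 1, TS_PACKET_SIZE):
        packet = mpts[offset:offset + TS_PACKET_SIZE]
        program = pid_to_program.get(packet_pid(packet))
        if program is not None:  # packets on unlisted PIDs are dropped
            streams[program] += packet
    return {program: bytes(data) for program, data in streams.items()}


def make_packet(pid):
    """Build a dummy payload-only packet on the given PID (test helper)."""
    header = bytes([SYNC_BYTE, (pid >> 8) & 0x1F, pid & 0xFF, 0x10])
    return header + bytes([0xFF]) * (TS_PACKET_SIZE - len(header))


if __name__ == "__main__":
    mpts = make_packet(0x100) + make_packet(0x200) + make_packet(0x101)
    table = {"news": {0x100, 0x101}, "sports": {0x200}}
    for program, data in split_mpts(mpts, table).items():
        print(program, len(data) // TS_PACKET_SIZE, "packets")
```

Once each program travels in its own stream, it can be switched, rate shaped or spliced on its own, which is the per-program processing the MPTS bundle makes difficult.
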
"What is going on now is we’re moving away from this static environment to the notion of edge processing, which is called switching," Riviere says. "In other words, the video packets will now be irrelevant. They won’t talk to each other while re-stat-muxing. I’m going to take MPTS and convert it to SPTS, and that’s what we call clamping." Since most customers only view a small percentage of the number of channels available to them, SPTS would provide individualized delivery of content over a network that is more holistic in how it deals with the diversity of content, which is part of a larger trend of all-digital content and services. "The simulcasting that is going on today is sort of static," Riviere adds. "You’re just recreating a digital version of your analog programs, and then you’re pushing them out to all of your subscribers. Once the MPTS becomes SPTS, then the world is open to broadcasting not only over the set-top box, but over IP, over DOCSIS or even fiber-to-the-home." Since that is also the finish line toward which the industry’s once-again telco video competitors are racing, the switched video option may offer just the kind of turbo-charged technology it takes to win this race. Mike Robuck is associate editor to Communications Technology and the lead contributor to CT’s Video Report. Reach him at mrobuck@accessintel.com Sidebar 1 Compression Tales The Comcast Media Center in Denver is a one-stop shop for grooming and encoding digital signals, which are then sent on to Comcast systems or other cable operators. Gary Traver, CMC’s chief operating officer, says CMC is looking at a variety of approaches, such as advanced codecs, for future digital video delivery. He also thinks there are still some improvements to be made in compression technologies. "The state of compression today is still too much of an art form and not enough of a science," he says. "It can be very easy to underestimate how much work is required. 
There isn’t a plug-and-play solution for getting it right."

Recompressing the signal is better than rate shaping, according to Traver. He says there could be a compounding effect when rate shaping is used on an encoded signal.

"We can achieve the same objective of reducing bandwidth (data rate) without that risk through recompression," he says. "A signal at lower bandwidth looks better than one rate shaped at a higher rate."

There are advancements on the compression side: Steve Watkins, Cox Communications’ director of digital video technology, says work on MPEG-4 is actually squeezing more out of MPEG-2. The obvious big leap of faith, however, will take place when the current legacy set-top boxes are replaced.

Network vendors have already unveiled, if not deployed, MPEG-4 products. EGT has ALTO, an H.264/MPEG-4 two-channel encoder. Terayon’s DM 6400 network CherryPicker supports the MPEG-4 format. And Harmonic’s MV 100 multi-codec encoding platform already has enabled the migration of an entire broadcast line from MPEG-2 to MPEG-4 at Video Networks Ltd., a U.K.-based service provider.

Sidebar 2: Lessons Learned from Digital Simulcast

The lessons learned from the first simulcast wave may be applicable to future waves. For example, migrating many legacy analog services to digital carriage, such as CE-GemStar guide data, Nielsen’s Automated Measurement of Lineup (AMOL I and II) and some closed-captioning formats, required the creation or modification of supporting tools.

For instance, SCTE-21 defines a popular and simple mechanism to carry vertical blanking interval (VBI) lines. Alternatively, extensions to the ETSI EN 301 775 standard are being formalized today that define digital carriage for a multitude of analog services, such as AMOL and CE-GemStar, in a manner concurrent with MPEG-2’s digital video structure over independent packet identifiers (PIDs).
The carriage of messaging and triggering for emergency alert systems (EASs) has changed substantially from the legacy analog mechanisms. In a digital simulcast network, triggering continues to occur via a local ENDEC (encoder/decoder) in the analog RF domain. However, because the architecture is digital end to end, a digital mechanism via SCTE-18 is increasingly being used to trigger EAS edge decoders, which provide EAS alerts to digital-ready quadrature amplitude modulation (QAM) compatible TV sets.

The flexibility of such recently defined standards provides a basic framework for the digital implementation of the analog services, but truly compliant translation of such services in most cases requires very detailed analysis of the feature sets and equipment supported. Future simulcast waves can be implemented more effectively through thorough planning and analysis of the required standards development in advance of execution.

Support for bulk "community cameras" has necessitated inefficient, analog-only solutions, since a locally inserted analog RF video signal takes up an entire 6 MHz channel that could otherwise be used by 12 digital services. This hinders the transition to all-digital networks and calls for an effective solution.

A historical lack of standards and solutions, strict regulation and consumer holdouts are making it difficult to discontinue the legacy analog services. The creation and enforcement of standards is key to effective and efficient simulcast implementations and to a successful transition to the new technology as the wave subsides and prior practices are phased out.

– From Badawiyeh and Riviere, "Cascading Simulcast Waves to Implement Advanced Technologies," SCTE Conference on Emerging Technologies, 2006.
