Capacity Demands on Metro Area Networks:

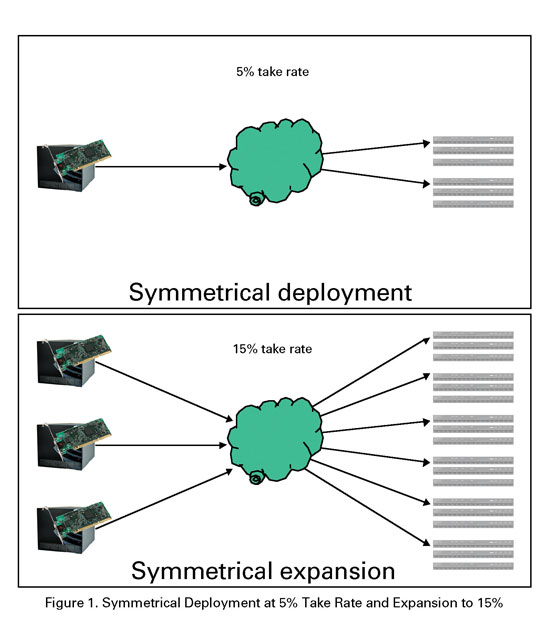

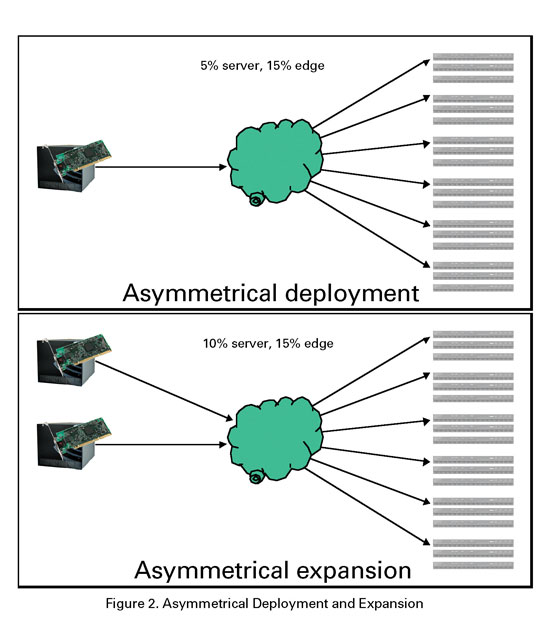

MSOs have been moving toward regional consolidation of systems and leveraging economies of scale by centralizing operations. This metro area network (MAN) structure eliminates redundant hardware such as receivers and other equipment needed to generate broadcast cable feeds. However, it introduces a need for additional transport from the central headend to local sites to distribute these feeds. Increasingly, cable operators are turning to gigabit Ethernet (GigE) solutions to supply this additional transport.

So, what is GigE?

GigE refers to a group of specifications encompassing the first (physical) and second (data link) layers of the open system interconnection (OSI) network model, with a maximum data rate of 1 gigabit per second (Gbps). In plain English, GigE is a group of specifications for signaling methods over various types of cabling (physical media), as well as the translation of higher-level network messages into data frames that are sent using those methods, at speeds up to 1 Gbps.

Why GigE?

GigE is denser and less expensive than competing interface formats and provides pervasive functionality such as switching and routing for use in its applications. The 1,000 Mbps capacity of GigE far exceeds the 214 Mbps limit of the competing Digital Video Broadcast Asynchronous Serial Interface (DVB-ASI), and outstrips both the 155 Mbps OC-3 and 622 Mbps OC-12 capacities often used by asynchronous transfer mode (ATM) equipment. Despite this higher capacity, GigE equipment generally is less expensive than competing equipment that uses other interface formats. Equipment cost savings are available to suppliers as well as their customers. The IP-based protocols underlying GigE applications also can be encapsulated for use with ATM, synchronous optical network (SONET) and resilient packet ring (RPR) transport equipment. For example, this allows a SONET fiber ring at the maximum capacity of OC-48 (2.5 Gbps) to carry the traffic for multiple GigE links.
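To put the capacity figures quoted above side by side, the following minimal sketch counts how many video streams fit in each interface and how many full-rate GigE links fit in an OC-48 ring. The link rates are the nominal values from the text; the 3.75 Mbps per-stream rate and the function names are assumptions for illustration only, and framing and protocol overhead are ignored.

```python
# Nominal link rates quoted in the article, in Mbps.
LINK_RATES_MBPS = {
    "OC-3": 155,
    "DVB-ASI": 214,
    "OC-12": 622,
    "GigE": 1000,
    "OC-48": 2500,
}

# Assumption (not from the article): one constant-bit-rate video stream.
STREAM_RATE_MBPS = 3.75


def streams_per_link(link_rate_mbps, stream_rate_mbps=STREAM_RATE_MBPS):
    """Whole streams that fit in a link, ignoring framing and protocol overhead."""
    return int(link_rate_mbps // stream_rate_mbps)


def gige_links_per_ring(ring_rate_mbps, gige_rate_mbps=1000):
    """Full-rate GigE links that fit in a ring of the given nominal capacity."""
    return int(ring_rate_mbps // gige_rate_mbps)


if __name__ == "__main__":
    for name, rate in LINK_RATES_MBPS.items():
        print(f"{name:8s} {rate:5d} Mbps -> {streams_per_link(rate)} streams")
    print("Full-rate GigE links per OC-48 ring:",
          gige_links_per_ring(LINK_RATES_MBPS["OC-48"]))
```

Under these assumptions an OC-48 ring holds two fully loaded GigE links; it can carry more links when each is only partially loaded.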
GigE serves well as a high-density, high-capacity, standardized interface to today’s wide assortment of optical transport gear.

GigE today

The regional consolidation trend favors a centralized architecture. Centralized deployments generate GigE traffic in the master headend, and transport nonlocal traffic to hubs using optical technology. In this scenario, narrowcast services clearly will generate much more nonlocal traffic than broadcast services, but the inner workings of both are basically identical. The traffic is transmitted to edge devices, which receive the GigE data, remultiplex it and transform it into upconverted RF digital cable signals, suitable for transport and recombination with other RF signals such as analog cable channels.

Expansion, service load distribution or transport network topology may make it desirable to supply certain hubs with application servers, to generate and distribute traffic like a headend, in addition to the normal GigE reception equipment. These sites perform a combination of the traditional headend and hub roles, and architectures using this type of design are referred to as hybrid architectures, because they are a mix of the standard centralized and distributed architectures.

Asymmetric deployment

Figures 1 and 2 show symmetric and asymmetric deployments and expansions.

GigE’s pervasive switching and routing functionality holds the promise of asymmetric system deployment and expansion. So, what does that actually mean? The use of the term "asymmetric" in this context refers to the balance of capacity between the traffic sources, such as video-on-demand (VOD) application servers, and the traffic sinks, or destinations, such as edge devices. When a system initially is sized, the cable operator estimates the expected take-rate. This estimate is multiplied by the number of customers served, giving an estimate of the number of simultaneous streams required for initial deployment. In a symmetric deployment, the cable operator purchases equipment for the desired take-rate and its resultant stream count, for both traffic sources and sinks. Symmetric expansion adds equipment in a similar fashion, maintaining a balance between traffic sources and sinks.

Why?

Several observations illustrate the motivation behind asymmetric deployment and expansion. The effort required for equipment installation differs between traffic sources, which are primarily centralized, and traffic sinks, which are distributed. First, installing distributed equipment requires more truck rolls to hub sites than installing centralized equipment. Second, the installation of edge devices, which must be connected to both GigE and RF networks, is more tedious than that of application servers, which require only a connection to the GigE network, irrespective of the particular location. Third, the probability of service disruption at installation is different for connection to the RF network (almost certain impairment or outage) than to the GigE network (probably transparent, because GigE switches usually can add new inputs and outputs without any disruption). Fourth, to satisfy a given stream count, many more edge devices than application servers are required. This fact exacerbates the differences resulting from the second and third points.
These factors combine to drive the cable operator to consider installing application servers separately from edge devices, in an asymmetrical fashion.

How?

In an asymmetrical deployment, the cable operator selects, buys and installs different numbers of servers and edge devices. It seems prudent to install more edge devices than application servers at first, because the costs and penalties of installation are higher for edge devices. The edge is over-provisioned to forestall further expansion and its associated liabilities. As a side benefit, the larger-volume purchase of edge devices affords the operator an opportunity for better pricing. Because the GigE network has pervasive switching and routing functionality, application servers can be configured to scatter their output across the various edge devices, which allows the cable operator to fine-tune its streaming capacity for a better match with local demand. This feature also can allow a forward-thinking operator to keep some reserve streaming capacity, to be configured for new destinations to address service demand peaks or failover conditions. The asymmetric approach allows improved amortization of installation expenses and more flexibility for applications like VOD.

But what are the weak points of the asymmetric approach? Buying more edge devices at the start provides some savings related to purchase volume, but locks in the price of the equipment before the combination of competition and new technology development can drive down prices. This approach also binds you more tightly to first-generation equipment, which can pose difficulty if the ideal device configuration is not yet available. This is perhaps of most interest to cable operators with particular requirements for encryption, especially if the encryption schemes required are proprietary. The last point is not a weakness of the asymmetric approach, but a question of its applicability. GigE transmission systems often use a centralized design initially.
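The sizing arithmetic described above (take-rate multiplied by customers served, then equipment bought to match) can be sketched as follows. This is a minimal illustration, not a vendor sizing method: the function names, the per-device stream capacities and the headroom parameter are all hypothetical. Setting the edge headroom to zero gives a symmetric plan; a positive value over-provisions only the edge, as in the asymmetric approach.

```python
def ceil_div(numerator, denominator):
    """Integer division rounded up, e.g. ceil_div(7, 2) is 4."""
    return -(-numerator // denominator)


def peak_streams(customers, take_rate_pct):
    """Simultaneous streams = customers served x expected take-rate (percent)."""
    return ceil_div(customers * take_rate_pct, 100)


def plan(customers, take_rate_pct, server_streams, edge_streams,
         edge_headroom_pct=0):
    """Return (streams, servers, edge_devices) for an initial deployment.

    server_streams and edge_streams are per-device stream capacities
    (hypothetical values supplied by the caller). edge_headroom_pct is the
    extra edge capacity bought up front; 0 means a symmetric deployment.
    """
    streams = peak_streams(customers, take_rate_pct)
    servers = ceil_div(streams, server_streams)
    edge_target = ceil_div(streams * (100 + edge_headroom_pct), 100)
    edge_devices = ceil_div(edge_target, edge_streams)
    return streams, servers, edge_devices


if __name__ == "__main__":
    # Hypothetical system: 50,000 customers, 10 percent take-rate,
    # 1,000 streams per server, 240 streams per edge device.
    print("symmetric :", plan(50_000, 10, 1_000, 240))
    print("asymmetric:", plan(50_000, 10, 1_000, 240, edge_headroom_pct=50))
```

With these made-up capacities, the edge device count is several times the server count even in the symmetric case, which is the fourth observation in the text; the asymmetric plan raises only the edge count.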
Evolving device functions

Compressed video’s unforgiving nature can turn every dropped packet into a customer call. This makes buffering and queuing critically important, especially when implementing quality of service (QoS). Edge devices must perform a new buffering and dejittering process prior to multiplexing when receiving GigE input. Implementations that support advanced content, such as HD and variable bit rate (VBR) video, must become more widely available and affordable to facilitate the deployment of these services and their additional revenue for the operator.

The use of encryption for VOD presents a dilemma for early adopters. Edge devices may offer integrated encryption, but compatibility with the customer’s existing equipment is an issue of great concern. Alternatively, you can envision moving the encryption function into a separate device, but this hasn’t happened, and does not alleviate the need for compatibility with existing equipment.

The GigE transmission framework creates a need to manage the GigE network’s data throughput. If the transmission network is dedicated to one application, like VOD, or uses statically configured data throughput reservations, then throughput generally does not need to be tracked on a per-session basis. However, as we move toward dynamic session assignment, where the network paths for sessions can be reassigned, the association of a session, its network path and its data throughput reservation must be maintained. This may require changes at each link in the transmission chain, from application server to edge device.

It’s unclear whether the current distribution of roles between devices is either technically or economically correct. It seems logical that because both switching and optical transport may require much higher internal bandwidth than other roles, a single device may perform both roles more cost-effectively. Movement in this direction already has begun.
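Returning to the throughput-management point above, the association of a session, its network path and its throughput reservation can be pictured with a small bookkeeping table. The sketch below is one possible shape for that bookkeeping, not a description of any shipping product: the class names, the kbps units and the assumption that link names are unique are all illustrative.

```python
from dataclasses import dataclass


@dataclass
class Link:
    name: str                 # assumed unique within the network
    capacity_kbps: int
    reserved_kbps: int = 0

    def free_kbps(self):
        return self.capacity_kbps - self.reserved_kbps


@dataclass
class Session:
    session_id: str
    rate_kbps: int
    path: list                # ordered Link objects, server to edge device


class ReservationTable:
    """Tracks which session holds how much throughput on which links."""

    def __init__(self):
        self.sessions = {}

    def admit(self, session_id, rate_kbps, path):
        """Reserve rate_kbps on every link of path, or reserve nothing."""
        if any(link.free_kbps() < rate_kbps for link in path):
            return False
        for link in path:
            link.reserved_kbps += rate_kbps
        self.sessions[session_id] = Session(session_id, rate_kbps, list(path))
        return True

    def release(self, session_id):
        """Tear down a session and return its throughput to each link."""
        session = self.sessions.pop(session_id)
        for link in session.path:
            link.reserved_kbps -= session.rate_kbps

    def reassign(self, session_id, new_path):
        """Move a session to new_path; keep the old path if it does not fit."""
        session = self.sessions[session_id]
        old_names = {link.name for link in session.path}
        new_names = {link.name for link in new_path}
        # Links shared by both paths already hold this session's reservation.
        added = [link for link in new_path if link.name not in old_names]
        if any(link.free_kbps() < session.rate_kbps for link in added):
            return False
        for link in added:
            link.reserved_kbps += session.rate_kbps
        for link in session.path:
            if link.name not in new_names:
                link.reserved_kbps -= session.rate_kbps
        session.path = list(new_path)
        return True
```

A statically configured network needs none of this, which matches the text: the table only becomes necessary once paths can change while sessions are active.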
It also may make sense for the optical transport receiver in the hubs to forgo unnecessary external connections to edge devices and absorb the edge device functionality, using a modular approach. Even if the roles for devices remain the same, there still exists a potential economic benefit if you use less expensive copper interfaces instead of optical ones for collocated equipment. If the distance in a hub from an optical transport receiver to its edge devices is generally less than 100 meters, copper interfaces ought to prove more cost-effective, unless the optical transport receiver never converts its optical signals to digital form. A similar example is between the application servers or switch in the headend and the optical transport transmitter. Again, unless the optical transport equipment is purely optical, the same economic benefit should be gained by using copper interfaces, and the savings will be multiplied because of the typically large number of ports involved at the headend.

Configuration

Initially, you must configure many new parameters, primarily IP and MAC addresses, for a GigE transmission network. This has been a source of aggravation for early adopters who had to input them manually. Manual entry is error-prone, so automated configuration tools bring huge time and labor savings. New methods of autoconfiguration and autodiscovery will allow these savings to extend to expansion and possibly failure scenarios.

The regional centralization of systems brings centralized management, monitoring and remote diagnostics to the forefront. Fortunately, standards for information exchange are established, so integration with existing monitoring equipment, as well as new operations support systems (OSS), ought to be straightforward. Once the problem of resource management has been tackled, the next questions become: "Who are these resources being managed for?" and "Can those managed resources be shared?"
Operators would like to share resources between multiple vendors. This can happen at both the service level (e.g., servers and systems from multiple vendors) and the equipment level (e.g., interoperable devices from multiple vendors). In each case, standards for interoperability must exist. For network equipment, the necessary information is already somewhat standardized, but for application resource sharing, attempts at standardization are usually proprietary.

Resources also can be shared at the application level, for instance between VOD services and DOCSIS traffic. Once equipped with a narrowcast-capable GigE transmission framework, cable operators also may want to provide telephony, interactive gaming and other data services within the same framework. QoS for these applications will in some cases dictate the amount of sharing possible, but if resource management is carefully structured, the effort required to accommodate these other services can be minimized. For this to happen, standard protocols to request and relinquish resources without bias toward any particular application must be devised.

Complex topologies

Centralization of headend services increases the risk of single-point failure for the operator. The establishment of multiple points of presence (POPs) to counteract this threat is necessary to provide the high reliability and availability expected of services such as telephony. All of these developments likely will lead to multiple valid network paths from point to point, and perhaps load-balancing or dynamic reassignment of these network paths in the midst of a session.

One-way vs. two-way

Applications such as one-to-one videophone have obvious needs for bidirectional communication, but the majority of applications, such as VOD, take advantage of the unidirectional nature of the RF network. For these applications, unidirectional transport seems a big win, because it doubles the bandwidth capacity available to the applications.
However, applications invariably use IP. Even when you specify the use of protocols (such as UDP) that are amenable to unidirectional communication, there is an unspoken but dangerous assumption that the underlying protocols required for IP to function, such as ARP and the Internet control message protocol (ICMP), also will work with a unidirectional link. This may be a bad assumption, and the capacity windfall quickly can become a configuration nightmare. Fortunately, a variety of proprietary, open and common-sense solutions are available to bring the nightmare under control.

Down the road

The advent of 10 GigE has been limited primarily to optical transport technology for two reasons. First, equipment costs are still at the high end of the curve. Second, few pieces of equipment are capable of doing much with 10 gigabits except to aggregate many 1 gigabit links, a task generally given to transport equipment. Looking at the trends of Ethernet capacity and pricing, you might speculate that 10 GigE will gain traction once the costs become tractable. However, a cautionary note: the application that requires an order-of-magnitude jump in data throughput capacity is difficult to identify. Even for the most promising case, HD VOD, technology advances in other fields such as video compression threaten to fulfill this demand without requiring significant additional capacity.

For more on GigE, read Chen’s paper, "Evolving the Network Architecture of the Future: The Gigabit Ethernet Story" from SCTE’s Cable-Tec Expo ’03. Order the manual at www.scte.org/bookstore.

Michael Chen is a senior systems engineer at the XSTREME Division of Concurrent Computer Corp. Email him at michael.chen@ccur.com.

Bottom Line

Gigabit Ethernet (GigE) technology is widely available, and its cost-effective nature enables the deployment of advanced services to provide cable operators with additional sources of revenue.
Systems are using GigE today, and the convergence of several trends makes this likely to continue for the foreseeable future. New application features that leverage the underlying GigE structure will cement this advantage.
